CoMA

Generating 3D faces using Convolutional Mesh Autoencoders

Anurag Ranjan, Timo Bolkart, Soubhik Sanyal, and Michael J. Black

European Conference on Computer Vision (ECCV) 2018, Munich, Germany

Abstract

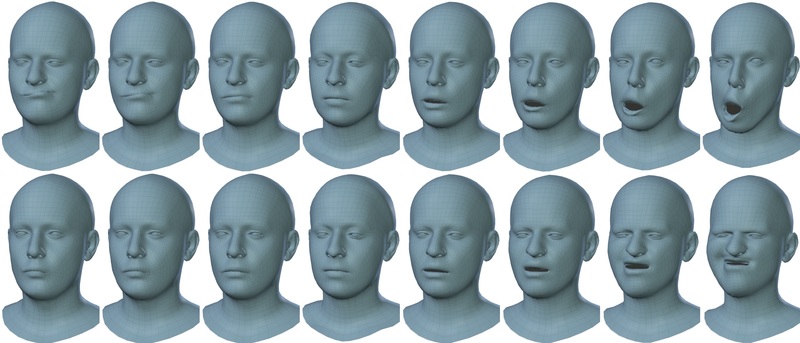

Learned 3D representations of human faces are useful for computer vision problems such as 3D face tracking and reconstruction from images, as well as graphics applications such as character generation and animation. Traditional models learn a latent representation of a face using linear subspaces or higher-order tensor generalizations. Due to this linearity, they cannot capture extreme deformations and non-linear expressions. To address this, we introduce a versatile model that learns a non-linear representation of a face using spectral convolutions on a mesh surface. We introduce mesh sampling operations that enable a hierarchical mesh representation that captures non-linear variations in shape and expression at multiple scales within the model. In a variational setting, our model samples diverse realistic 3D faces from a multivariate Gaussian distribution. Our training data consists of 20,466 meshes of extreme expressions captured over 12 different subjects. Despite limited training data, our trained model outperforms state-of-the-art face models with 50% lower reconstruction error, while using 75% fewer parameters. We also show that replacing the expression space of an existing state-of-the-art face model with our autoencoder achieves a lower reconstruction error.
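The abstract refers to spectral convolutions on a mesh surface. As a rough illustration only, the sketch below applies a Chebyshev-polynomial filter to per-vertex features of a toy mesh. The Chebyshev approximation is assumed here as one common way to realize a spectral convolution; the function names, the tetrahedron mesh, and the random weights are all hypothetical and are not taken from the released TensorFlow or PyTorch code.

```python
import numpy as np

def scaled_laplacian(adjacency):
    """Return 2L/lambda_max - I, where L is the symmetric normalized graph Laplacian."""
    degree = adjacency.sum(axis=1)
    # Guard against isolated vertices (degree 0) when inverting.
    inv_sqrt = np.where(degree > 0, 1.0 / np.sqrt(np.maximum(degree, 1e-12)), 0.0)
    n = adjacency.shape[0]
    laplacian = np.eye(n) - inv_sqrt[:, None] * adjacency * inv_sqrt[None, :]
    lambda_max = np.linalg.eigvalsh(laplacian).max()
    return 2.0 * laplacian / lambda_max - np.eye(n)

def chebyshev_conv(features, laplacian_scaled, weights):
    """Chebyshev spectral filter.

    features: (n_vertices, f_in) per-vertex inputs
    weights:  (K, f_in, f_out), one weight matrix per polynomial order
    """
    t_prev = features                      # T_0(L) x = x
    out = t_prev @ weights[0]
    if weights.shape[0] > 1:
        t_curr = laplacian_scaled @ features   # T_1(L) x = L x
        out = out + t_curr @ weights[1]
        for k in range(2, weights.shape[0]):
            # Recurrence: T_k = 2 L T_{k-1} - T_{k-2}
            t_next = 2.0 * (laplacian_scaled @ t_curr) - t_prev
            out = out + t_next @ weights[k]
            t_prev, t_curr = t_curr, t_next
    return out

# Toy mesh (assumption): a tetrahedron, where every vertex touches every other.
vertices = np.array([[0.0, 0.0, 0.0],
                     [1.0, 0.0, 0.0],
                     [0.0, 1.0, 0.0],
                     [0.0, 0.0, 1.0]])
adjacency = np.ones((4, 4)) - np.eye(4)

rng = np.random.default_rng(0)
weights = rng.normal(size=(3, 3, 8))       # K = 3 orders, 3 inputs, 8 outputs
l_tilde = scaled_laplacian(adjacency)
out = chebyshev_conv(vertices, l_tilde, weights)
print(out.shape)
```

Here the vertex coordinates act as the input features, so each vertex's output mixes information from its mesh neighbors up to two edges away. In a trained model the weights would be learned rather than drawn at random.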

More Information

- Please sign up and agree to the license for access to the model or the data

- pdf preprint

- supplemental

- TensorFlow code

- PyTorch code

- CoMA project page at MPI-IS

- For questions, please contact coma@tue.mpg.de

Referencing the Dataset

Here is the BibTeX entry for citing CoMA in your work.

@inproceedings{COMA:ECCV18,
  title = {Generating {3D} faces using Convolutional Mesh Autoencoders},
  author = {Ranjan, Anurag and Bolkart, Timo and Sanyal, Soubhik and Black, Michael J.},
  booktitle = {European Conference on Computer Vision (ECCV)},
  pages = {725--741},
  year = {2018},
  url = {http://coma.is.tue.mpg.de/}
}